
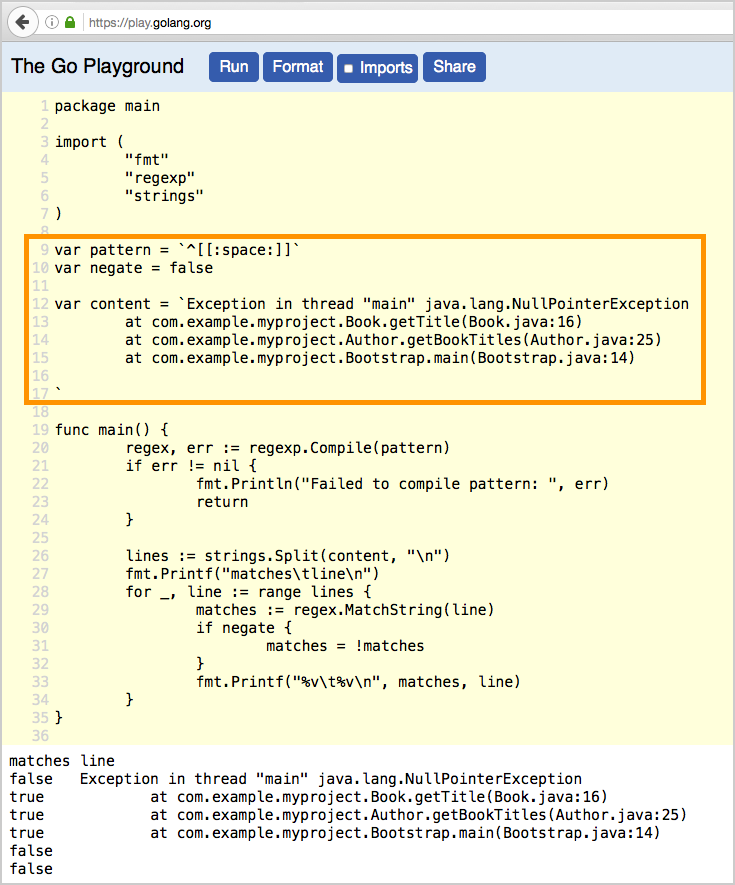

    Restaurant Name,Zip Code,Inspection Date,Score,Address,Facility ID,Process Description
    Wieland Elementary,78660,100,"900 TUDOR HOUSE RD
    (30.422862, -97.640183)",10051637,Routine Inspection

DOH… This isn’t going to be a nice, friendly, single-line-per-entry case, but that’s fine. As you’re about to see, Filebeat has some built-in ability to handle multiline entries and work around the newlines buried in the data.

Editorial Note: I was planning on a nice, simple example with few “hitches”, but in the end, I thought it might be interesting to see some of the tools that the Elastic Stack gives you to work around these scenarios.

The first step is to get Filebeat ready to start shipping data to your Elasticsearch cluster. Once you’ve downloaded and extracted Filebeat (try to use the same version as your ES cluster), it’s extremely simple to set up via the included filebeat.yml configuration file. For our scenario, here’s the configuration that I’m using:

    # Ignore the first line with column headings
    # Identifies the last two columns as the end of an entry and then prepends the previous lines to it
    # Optional protocol and basic auth credentials.

Everything’s pretty straightforward here: you’ve got a section to specify where and how to grab the input files and a section to specify where to ship the data. The only parts I’ll call out specifically are the multiline bit and the Elasticsearch configuration piece.

Since the formatting for this data set is not super strict, with inconsistent use of double quotes and a number of newlines sprinkled in, the best option was to look for the end of an entry. That end is the last two columns: a numeric Facility ID followed by the inspection type (Process Description), which shows little variation and never contains double quotes or newlines. From there, Filebeat just queues up any lines that don’t match the pattern and prepends them to the line that does, which closes out the entry. If your data is cleaner and sticks to a simple one-line-per-entry format, you can pretty much ignore the multiline settings.
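The input section with the multiline behavior described above might look roughly like the following. This is a minimal sketch, not the original file: it assumes the `log` input syntax of Filebeat 6.3 through 7.x (older releases name the section `filebeat.prospectors`, and 8.x favors the `filestream` input, which configures multiline differently), and the file path and exact regular expression are hypothetical.

```yaml
filebeat.inputs:
- type: log
  # Hypothetical location of the inspection CSV export
  paths:
    - /path/to/restaurant_inspections.csv
  # Ignore the first line with column headings
  exclude_lines: ['^Restaurant Name,']
  # Treat the last two columns (a numeric Facility ID, then an inspection
  # type with no quotes or commas) as the end of an entry.
  # "Facility ID" is also accepted (an assumption of this sketch) so the
  # header line becomes its own event and exclude_lines drops only it.
  multiline.pattern: ',(\d+|Facility ID),[^",]+$'
  # Lines that do NOT match are queued and prepended to the next line
  # that does match.
  multiline.negate: true
  multiline.match: before
```

The `[^",]+` part keeps a line like `Wieland Elementary,78660,100,"900 TUDOR HOUSE RD` from ending an entry early, because the text after `,100,` starts with a double quote. The header alternation matters because Filebeat combines multiline messages before applying `exclude_lines`: if the header never matched, it would be glued onto the first record and that whole record would be excluded along with it.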

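On the output side, the “Optional protocol and basic auth credentials” comment appears to come from the stock filebeat.yml, whose Elasticsearch section looks something like the sketch below. `localhost:9200` and the commented-out values are placeholders to swap for your own cluster’s address and credentials.

```yaml
output.elasticsearch:
  # Array of hosts to connect to (placeholder address)
  hosts: ["localhost:9200"]

  # Optional protocol and basic auth credentials.
  # Uncomment and fill in if the cluster uses TLS and/or security.
  #protocol: "https"
  #username: "elastic"
  #password: "changeme"
```

Filebeat allows only one enabled output, so if the stock `output.logstash` section is uncommented, comment it out when shipping straight to Elasticsearch. Running `./filebeat -e -c filebeat.yml` keeps Filebeat in the foreground and logs to stderr, which is a convenient way to check that entries are being grouped as expected.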